Guidelines for online training best practice integrity measures

Foreword

This white paper provides authors of units of competency, industry representatives, regulators, policy writers and Registered Training Organisations (RTOs) with an insight into the online integrity measures that MUST be in place to comply with the Standards for Registered Training Organisations 2015.

Understanding the best-practice online integrity measures in this white paper will assist:

Authors of units of competency to reduce the likelihood of accidentally restricting the ability of RTOs to effectively use the online mode of delivery.

Industry representatives to boost their confidence that learning outcomes can be achieved through the online mode of delivery.

Regulators and policy writers at a state or territory level to specify aspects of online integrity as mandatory requirements in the conditions of approval for state-based approved training providers.

RTOs in interpreting the Standards for RTOs 2015 in relation to the online mode of delivery, and in developing compliant training and assessment strategies.

Students to receive value for money, with real learning outcomes and valuable skills and knowledge for employment, via a flexible, effective and efficient online mode of delivery.

Disclosure: All of the integrity measures mentioned in this white paper, covering enrolment, training and assessment (including demonstration of skills in simulated online environments and serious games), were developed for several units of competency using the Enrolo learning management system (LMS). These units of competency were audited by ASQA and successfully added to an RTO’s scope for distance learning via an asynchronous and synchronous, 100% online mode of delivery.

Disclaimer: Online training is a rapidly evolving mode of delivery. Any information provided in this white paper is the opinion of the authors, is open to interpretation, and is not to be relied on for compliance purposes.

Contents

Australian Accredited online training Best practice integrity measures WHITE PAPER

Foreword

Background

The Future of Online Learning

Benefits of high integrity online training

High integrity online training costs

Autonomy to Author Training and Assessment is Essential

Online Integrity Measures

1. Confirming Student Identity

1.1. Valid Photo Identification

1.2. Unique Student Identification

1.3. Website with HTTPS Security

1.4. Unique username

1.5 Secure Password

1.6 Identification during phone support

1.7 Terms and Conditions

2 Assessing Student Competency

2.1 Free text

2.2 Video Demonstration

2.3 Lock out

2.4 Multi choice wrong ratio

2.5 Not given answer

2.6 No direct answer link

2.7 Random questions

2.8 Question banks

2.9 Prevent Concurrent viewing of Learning material and Assessment

2.10 Progress through the assessment is clearly indicated – complying with the fairness guidelines of the Standards for RTOs

3 Signing Off of Student Competency

3.1 Use of qualified trainers

3.2 Trainer Roster

3.3 Trainer availability

3.4 Human validation

4 Minimising the Potential for Fraudulent Activity

4.1 Capture the student’s IP address

4.2 A system for monitoring cheating

4.3 Student name fields must be locked

4.5 Must be able to intervene

4.6 Warn about fraudulent activity

4.7 Ability to report suspicious activity

4.8 Deliver training via SSL

4.9 Agree to terms and conditions

4.10 Statutory Declaration

5 Course Content

5.1 Volume of Learning

5.1.1 Minimum learning time

5.1.2 LMS capability to manage time

5.1.3 Active learning

6 Demonstration of skills in online simulated environments

7 Issuing Statements of Attainment

7.1 No Name change

7.2 Unique ID

7.3 Watermark

7.4 Locked PDF

8 Learning Management System Data Capture Requirements

8.2 Number of attempts

8.3 Result of all attempts

8.4 Data base time stamp of data

9 Auditing Approach for Online

10 Feedback welcome and appreciated

Background

In response to the COVID-19 pandemic restrictions, there has been an unprecedented move by traditional in-class training providers to “online training”, mostly using live-streamed classroom and webinar technology. As Registered Training Organisations (RTOs) progress to more sophisticated self-paced learning presentations and online assessments, many are either unaware of the online integrity requirements or, in some cases, deliberately not implementing them in order to cut costs and compete with the lowest-cost, non-compliant providers already in the market. In the meantime, following a strategic review into online training, ASQA is in a better position to audit these low integrity providers and help level the playing field for serious online training providers.

Many employers have experienced a lack of knowledge and poor skills from students who gained qualifications through low-integrity, low-cost online training, and as a result may mistrust online qualifications. In such training, statements of attainment can be obtained in a matter of minutes by simply clicking through without reading or engaging with the course material, or by randomly guessing until the student passes an assessment that is automatically marked by a computer.

Training providers that cut corners with online training are leading educators in a race to the bottom in the quality of training and assessment, and are ultimately undermining the student’s value for money. The result of this lack of integrity is not only poor learning outcomes and inadequate training, leading to inefficiencies or dangerous practices in the workplace, but also damage to the reputation of the online or ‘e-learning’ industry. It also puts the RTO at risk of compliance breaches with ASQA, which is fully aware of online integrity requirements such as those outlined in this white paper.

Sophisticated online training has much to offer the training sector, including better access for remote and regional communities and better flexibility for students who may be working, studying, or parenting at home. In many cases high integrity online training can provide better learning outcomes through the use of engaging multimedia technologies such as Scenario Based Training Interactives and Serious Games. Another advantage of online training is that students can study at their own pace and focus on areas where they are having comprehension difficulties without holding up the whole class. High integrity online training allows students to have as much access to qualified trainers as they need, compared with in-class training where one-on-one contact is limited. High integrity online training where data is properly captured in an LMS can also improve evidence of training and assessment.

To date there has been little guidance on online integrity from the authors of units of competency, who maintain that it is up to RTOs to determine the mode of delivery of their training and to determine for themselves, via their training and assessment strategy, how to achieve the learning and assessment outcomes of a unit of competency. It is also assumed that ASQA will audit against the Standards for Registered Training Organisations (RTOs) 2015 to ensure integrity, rather than online integrity measures being added as a requirement to units of competency. However, the Standards for RTOs 2015 do not provide explicit or obvious guidance for new online providers to ensure that their online training has high integrity. Rather, the requirements for online integrity fall under Clauses 1.8 – 1.12 of the Standards for Registered Training Organisations 2015, “Conduct effective assessment”, including the principles of assessment (fairness, flexibility, validity and reliability) and the rules of evidence (validity, sufficiency, authenticity and currency). To be compliant, RTOs must consider the integrity measures outlined in this white paper in that context.

There are examples of regulators (e.g. state and territory regulatory authorities) stipulating, via conditions of approval, the capability to deliver minimum integrity measures (many of which are outlined in this white paper) in order to be a state-based approved provider. This may have been in response to ASQA not previously ranking online integrity as a high risk, or not fully auditing all online providers’ courses for regulatory completeness. However, ASQA has recently conducted a strategic review into online training and is aware of, and taking action on, the integrity issues highlighted in this white paper.

In the past, some of the Industry Skills Councils have actively added requirements to units of competency to try to exclude the online mode of delivery. The industry representatives on these skills councils may have had members with bad experiences of staff who completed low integrity online training, and they may have limited understanding of the availability of high integrity online technologies and the capability of technology to deliver high quality learning outcomes. On the other hand, some skills councils have removed challenging aspects of a unit (such as demonstration of skills in a simulated industry environment) because they incorrectly believed that integrity was overly difficult to achieve online and would add to the cost of delivery and raise prices. This has resulted in units of competency that deliver poor learning outcomes but can easily be delivered online by low integrity providers at low cost.

In March 2022 ASQA was nominated as the ‘National Training Package Assurance Body’. Moving forward, armed with fresh insights into online integrity issues from the strategic review into online training, the inconsistency between how units of competency are authored and how integrity is managed will be addressed. ASQA will also be able to act against low integrity online providers, establishing a more level playing field for those that take integrity seriously in the online mode of delivery.

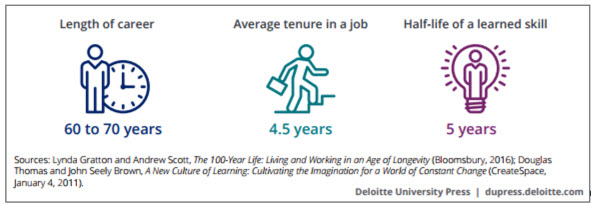

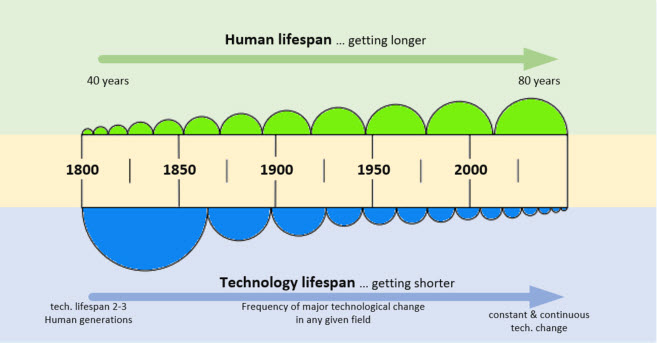

The Future of Online Learning

Automation in the training profession continues to advance at an ever-increasing speed, heading towards exponential growth. Examples include interactive videos, computer auto-marking, interactive video assessment, virtual reality scenarios, Scenario Based Training Interactives (SBTIs) and serious games for the demonstration of skills. The Experience API (xAPI) is also allowing online training to move beyond the boundaries of formally structured content development and the classroom.

Many qualifications that are taught using sophisticated online training methods can already deliver the same, if not better, learning outcomes than in-class training. Since online training innovation is growing at an exponential rate, it is advantageous for high integrity online training providers to be allowed to author their own learning materials to keep up with these changes.

Benefits of high integrity online training

The benefits of high integrity online training include but are not limited to:

- Improved accessibility, particularly for students in remote or regional areas or parents with young children.

- Students can learn in their own time and do not have to take time off work or study.

- Provides online experiences that are relatable, can trigger behavioural change, facilitate decision making and increase critical thinking skills.

- Provides an understanding of the impact and consequences of students’ decisions and choices in a safe mode, and gives them a way to hone skills and achieve higher proficiency levels.

- Creates sticky learning experiences, facilitates problem solving, and provides guided exploration and a safe practice zone in which to gain proficiency and mastery.

- Supports a successful learning strategy because it can be designed to be motivating, engaging, immersive, relevant and relatable, replicable, consistent, challenging, and rewarding.

- Reduced travel costs such as transport and parking during training and reduced carbon emissions.

- Greater consistency in terms of content delivered and the assessment method.

- Quicker response times to updating learning content resulting in more up to date learning resources.

- More empirical data can be collected for demonstrating competency and adherence to high integrity.

- Reduced administrative and non-value-adding work such as marking quizzes or issuing certificates.

- Fewer monotonous tasks for trainers and more time spent with students who need their assistance.

- Reduced human error through less reliance on monotonous and repetitive tasks.

- Learners can work at their own pace and concentrate on areas they do not understand.

- Students can spend as much time with qualified trainers as they need.

- Students have ongoing access to learning material and can also be provided with updates to it.

- Reduced room hire costs, which are, however, offset by the costs of online tools, online delivery, technical support and online course content development.

High integrity online training costs

There is a misperception among some training providers that online training is a way to cut costs and increase profits, for example by eliminating trainers and classrooms and automating everything, including assessment, with a computer. However, if a training organisation is meeting the high integrity online measures outlined in this white paper and the requirements of the Standards for RTOs 2015, then despite the reduced cost of classrooms, the cost of delivering effective online training is equal to, if not greater than, that of in-class training. This is because all of the existing requirements for in-class training (such as qualified trainers and assessors, mapping, internal audits and continuous improvement registers) still need to be in place for online training. In addition to these costs, online providers need to purchase and maintain an online learning environment (LMS) and a student management system (SMS), and build digital learning material and robust digital assessments. There is also a much higher requirement for one-on-one engagement between students and trainers and assessors when students need assistance with comprehension or get locked out of an assessment. This one-on-one approach dramatically changes the learning landscape and massively increases costs. This is only the case, however, if the design of the learning has high integrity, which forces the student to contact the trainer when needed, such as when locked out from continuing. This is a paradigm shift in which the learning management system does most of the training and the trainer and assessor assists those who need help as much as they need, so much so that in this context they may also be considered a tutor.

Whilst some RTOs believe that an online slide show with voice-over, followed by an online quiz requiring a student to guess a few true/false questions that are automatically marked by a computer, meets the Standards for RTOs 2015, effective and engaging online training includes videos, gamification, interactive scenarios, simulated environments, animations with voice-overs and challenging exercises. Effective assessment requires that students are locked out from excessive guessing, and includes essays and free text responses as well as video demonstration of skills or offline activities. Online training that contains these kinds of elements can cost up to AUD$30,000 per hour of e-learning output. Compare that to a PowerPoint presentation created for in-class training: high integrity online training providers are spending approximately $180,000 to develop a six-hour online course, versus a few thousand dollars for an equivalent in-class presentation, or even less for low integrity online courses.

Autonomy to Author Training and Assessment is Essential

From a training perspective, it is essential to realise that, to achieve efficient and effective online learning, a training provider’s ability to edit content is inseparable from its ability to edit assessment. This is because assessments are directly related to the learning material, and achieving accurate mapping to a unit of competency may require assessment questions to be written in a way that requires regular edits to the content. Furthermore, if an assessment question is causing confusion or returning a high rate of incorrect answers, it may be the content that needs to be edited rather than the assessment question. Another issue with mandatory content provided by regulators is that when government bodies are renamed or restructured, they often lose the funding to maintain content, and training providers cannot then update it for name or content changes because they do not have open-source access. Government bodies that attempt to provide learning material to training providers may face increased costs and struggle to keep up with rapidly evolving innovation, and this should be avoided. However, there have been some good examples of authorities providing effective videos that RTOs have incorporated into their learning material.

Rather than being overly prescriptive about state requirements, an alternative approach that regulators can consider is to provide elements such as videos or interactive content that an online training provider may include as part of a course. A good example of this is the “Behind the bar RSA training video – Just one more”, authored by the Office of Liquor and Gaming Regulation Queensland, which invites training providers to use the content in their courses royalty free.

A consideration for regulators providing such content is to provide open-source access to authored content so that training providers can modify it as needed.

A key takeaway for regulators’ risk assessment of online training is that sale prices for online courses that are well below the average price offered by others in the market are likely to reflect low expenditure on developing the content, which is therefore unlikely to meet the Standards for RTOs 2015. Very low sale prices that are below the cost of paying a trainer to be involved may indicate that students are not being forced to access trainers when they have comprehension issues or are guessing excessively.

Online Integrity Measures

RTOs developing online training must ensure that all the following measures are in place to meet the requirements of the Standards for RTOs 2015.

1. Confirming Student Identity

Confirming student identity in an online setting is of paramount importance in reducing fraudulent activity, i.e. by ensuring that the person doing the training is the person obtaining the Statement of Attainment/Certificate. Fingerprint and face scans are becoming more prevalent; however, until these are more widely accepted, the following procedures and features are considered best practice to verify that a student who is enrolled in an online course is the person who also completes the course and receives the certification:

1.1. Valid Photo Identification

Students must provide, via a secure (HTTPS) website, a copy of current government-issued photo ID, which is reviewed and accepted or rejected by trained staff. Details captured and verified must include the full name, date of birth and contact details (including residential address, valid email address and contact phone number) of the student.

The residential address of the student must be validated. Validation can be improved by using an online address validation service or address look-up tool.

Students must not be allowed to change personal details themselves – they need to lodge a request and provide evidence, such as government-issued photo ID, marriage certificate, change of name certificate, etc. where required.

For video assessment, the valid photo ID should be displayed against a student account when videos are being assessed, or during video conferencing so trainers can ascertain that they are speaking with the student who has made the recording.

In many circumstances this procedure may exceed the identification integrity of classroom training environments.

1.2. Unique Student Identification

In Australia it is a government requirement for nationally recognised training that students obtain a Unique Student Identifier (USI) before completing their enrolment, and the USI details must match the valid photo identification provided before students can be issued with their Statement of Attainment/Certificate (qualification).

1.3. Website with HTTPS Security

Students must only access online courses through a secure HTTPS website that requires a login and password.

1.4. Unique username

Students must create their own unique username, such as an email address.

1.5 Secure Password

Students must create a secure password themselves. If an overly complex password is issued to a student, they will need to write it down, which reduces the security of the password.

Students must be provided with best practice information regarding setting up and maintaining the security of their passwords.

Password recovery must be via a link that sends recovery instructions to the student’s registered email address. Passwords must not be able to be changed unless the student is logged into the account or, if they have lost email access, provides the answer to a secret question.

2 Assessing Student Competency

Assessments must be mapped to a unit of competency to demonstrate that all the required learning elements have been assessed.

In an online environment, assessments that are designed to provide a true reflection of the knowledge and skills that have been achieved are one method of proving competency has been gained. Offline activities can also be undertaken and witnessed by a suitably qualified supervisor and their accounts included for consideration.

For an RTO to be compliant in an online environment (i.e. for assessments to demonstrate that a student has achieved understanding and competency), its online system must have the following integrity measures:

- Free text – Require at least one question in each assessment (ideally 25%) to be answered with free text sentences (i.e. five or more words, not a single word or number), allowing a proper critical response to questions.

- Video Demonstration – In the case of demonstration of skills, require video footage to be submitted to capture a student’s ability to demonstrate a task or skill.

- Lock out – If a student gets the same question wrong three (3) times, they must be “locked out” and required to talk to the training organisation, and if they need assistance they must be referred to a qualified trainer, i.e. rather than being able to keep guessing until they get the question correct through a process of elimination.

- Multi choice wrong ratio – Multiple choice questions must have more wrong combinations than available attempts, and must be designed so that students will be locked out before they can guess the answer through a process of elimination.

- Not given answer – Students must not be provided with the correct answer, e.g. before or after attempting an assessment, until they get the answer correct themselves. When re-attempting assessments, students must get the full range of options and not be shown which answers have already been attempted and marked as incorrect.

- No direct answer link – Students must not be provided with links from the question directly to the correct answers in the learning material.

- Random questions – The order of assessment questions must be randomised to reduce the likelihood of cheat sheets.

- Question banks – Because of the complexity of meeting mapping requirements when questions are randomised, banks of similar questions must be created and questions randomly selected from those banks, so that the selected questions still achieve the required mapping.

- Prevent concurrent viewing of learning material and assessment – The online system must ensure that students cannot have the learning material open in one window and the assessment in another, which would allow the student to copy and paste answers without critical thinking. This needs to be in place for multiple tabs, multiple browsers on the one device, and multiple logins across multiple devices.

- Progress through the assessment is clearly indicated – complying with the fairness guidelines of the Standards for RTOs.
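The lock-out and question-bank measures in the list above can be sketched in a few lines of Python. This is only an illustrative sketch, not how any particular LMS implements them: the names `AssessmentSession`, `trainer_unlock` and `build_assessment` are hypothetical, and resetting the attempt counter when a trainer unlocks the student is an assumption rather than something the measures specify.

```python
import random
from collections import defaultdict

MAX_WRONG_ATTEMPTS = 3  # lock-out threshold stated in the measures above


class AssessmentSession:
    """Hypothetical tracker of wrong attempts per question for one student."""

    def __init__(self):
        self.wrong_attempts = defaultdict(int)
        self.locked = False

    def submit(self, question_id, is_correct):
        if self.locked:
            raise PermissionError("Locked out: a qualified trainer must unlock")
        if is_correct:
            return "correct"
        self.wrong_attempts[question_id] += 1
        if self.wrong_attempts[question_id] >= MAX_WRONG_ATTEMPTS:
            self.locked = True
            return "locked_out"
        # Only "incorrect" is returned: no correct answer, no eliminated options.
        return "incorrect"

    def trainer_unlock(self, question_id):
        # Assumption: unlocking after the trainer conversation resets the counter.
        self.locked = False
        self.wrong_attempts[question_id] = 0


def build_assessment(question_banks, rng=random):
    """Pick one question from each bank, then shuffle the presentation order.

    question_banks maps a mapping requirement (e.g. a performance criterion)
    to a list of interchangeable question ids, so every requirement is still
    covered whichever questions are drawn.
    """
    selected = [rng.choice(bank) for bank in question_banks.values()]
    rng.shuffle(selected)
    return selected
```

Drawing exactly one question per bank is one simple way to keep randomisation from breaking the mapping to the unit of competency; a real assessment may need several questions per requirement.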

In contrast to assessments that comply with the above high integrity measures, assessments that are 100% marked by a computer, predominantly multiple choice with unlimited elimination attempts, or true/false by elimination with two attempts provide no requirement or opportunity for a student to demonstrate their comprehension of the learning material. Whilst it may be argued that eventually getting a question right through guessing is a form of learning, allowing students to keep attempting questions throughout an entire assessment until they get the correct answer means the student does not even need to read the question or answer, and does not deliver meaningful learning outcomes. Demonstrated competency through rigorous and auditable assessment, in combination with the above integrity measures, is considered an integral requirement for the demonstration of online learning outcomes.
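The “more wrong combinations than available attempts” rule can be checked with simple arithmetic. The sketch below is illustrative only; the function names are hypothetical, and it assumes that a multi-select question accepts any non-empty selection of options with exactly one selection being correct.

```python
def wrong_combinations(num_options, multi_select=False):
    """Count the distinct wrong answers a student could submit."""
    if multi_select:
        # Assumption: any non-empty subset may be submitted (2**n - 1 of them),
        # and exactly one subset is correct.
        return 2 ** num_options - 2
    # Single-answer question: every option except the correct one is wrong.
    return num_options - 1


def resists_elimination(num_options, attempts, multi_select=False):
    """Apply the rule as worded in the measures: wrong combinations > attempts."""
    return wrong_combinations(num_options, multi_select) > attempts
```

Under these assumptions a true/false question with two attempts fails the check (one wrong answer, two attempts), which matches the example in the paragraph above. With a three-attempt lock-out, a single-answer question with four options also fails (three wrong answers), while one with five options, or a four-option multi-select question, passes.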

3 Signing Off of Student Competency

Online training providers may try to cut corners and costs by using administration staff with no training qualifications to assist with training. To cut costs training providers may also reduce access by students to qualified trainers to very short periods. It is essential that qualified trainers are available to students in an online environment. These trainers may communicate with the students via:

- Telephone

- Email

- Live chat

- Webinars

- Video conferencing

To improve integrity measures, RTOs must implement the following:

- Use of qualified trainers – Qualified trainers must be used for training support. All trainers employed by a training organisation must be on a training register.

- Trainer Roster – Total trainer hours must as a minimum reflect availability during normal office hours.

- Trainer availability – Trainers must be available at least during normal business hours and must assist learners requesting training within 30 minutes of a request.

- Human validation – An online statement of attainment must not be issued until the enrolment details have been confirmed and the student’s assessment and learning activities have been reviewed by a qualified trainer. This sign-off process must be demonstrated by data in the learning management system.
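As a rough illustration of the human validation measure, the check below refuses to issue a statement of attainment unless the sign-off data is present. The record fields and the `can_issue_statement` function are hypothetical names chosen for this sketch, and the trainer register is assumed to be available as a set of trainer ids.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class EnrolmentRecord:
    """Hypothetical subset of the LMS data needed to evidence sign-off."""
    student_id: str
    identity_confirmed: bool = False
    assessment_complete: bool = False
    reviewed_by: Optional[str] = None  # id of the trainer who reviewed the work
    reviewed_at: Optional[str] = None  # timestamp of the review, e.g. ISO 8601


def can_issue_statement(record, trainer_register):
    """Allow issue only when a trainer on the register has signed off."""
    return (
        record.identity_confirmed
        and record.assessment_complete
        and record.reviewed_by in trainer_register
        and record.reviewed_at is not None
    )
```

Storing the reviewer and timestamp on the record, rather than a single yes/no flag, is what lets the sign-off be demonstrated from LMS data at audit.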

4 Minimising the Potential for Fraudulent Activity

RTOs must ensure that the following integrity measures are in place to minimise the potential for fraudulent activity:

- Capture the student’s IP address – and monitor whether bulk users are coming from the one IP address.

- A system for monitoring cheating must be in place that flags when different students give the exact same free text answers to free text assessment questions.

- Student name fields must be locked so that these can only be changed by administration staff upon request and subsequent verification of the student’s identification.

- ‘Lock-out’ access – Lock the student out of an assessment after a set number of failed attempts at a question (3), and require them to speak with a qualified trainer to be unlocked if they do not know the answer.

- Must be able to intervene and make direct contact with the student if required.

- Warn about fraudulent activity – Notify students that certification may be voided if fraudulent information is provided or fraudulent activity is detected.

- Ability to report suspicious activity – The learning system must have the ability to identify and report immediately any suspicious activity by students undertaking the course to the appropriate authority.

- Deliver training via SSL – Deliver training over SSL (i.e. HTTPS at the start of the domain) to reduce third-party fraudulent activity.

- Agree to terms and conditions – Capture electronic evidence that the student has agreed to terms and conditions relating to not being assisted in any way during assessment and that they are the one that undertook and completed the training.

- Statutory Declaration – If adequate integrity measures are not in place, or a student has breached an integrity measure, students must sign and upload a witnessed statutory declaration that they are undertaking the training without assistance.
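Two of the monitoring measures above, flagging identical free text answers and flagging many students on one IP address, could be approximated as follows. This is a sketch under stated assumptions: the function names are hypothetical, answers are compared after normalising case and whitespace (the measure itself only asks for exact matches), and the threshold of five students per IP address is an arbitrary illustrative value.

```python
from collections import defaultdict


def normalise(text):
    """Lower-case and collapse whitespace so trivial edits do not hide a copy."""
    return " ".join(text.lower().split())


def flag_identical_answers(submissions):
    """submissions: iterable of (student_id, question_id, answer_text).

    Returns {(question_id, normalised_answer): {student ids}} for every
    answer given by more than one student.
    """
    groups = defaultdict(set)
    for student_id, question_id, answer in submissions:
        groups[(question_id, normalise(answer))].add(student_id)
    return {key: ids for key, ids in groups.items() if len(ids) > 1}


def flag_bulk_ips(logins, threshold=5):
    """logins: iterable of (student_id, ip_address).

    Returns {ip_address: {student ids}} where at least `threshold`
    different students used the same address.
    """
    by_ip = defaultdict(set)
    for student_id, ip in logins:
        by_ip[ip].add(student_id)
    return {ip: ids for ip, ids in by_ip.items() if len(ids) >= threshold}
```

A flag from either function is only a prompt for a trainer or administrator to investigate; shared workplaces and households can legitimately produce many students on one address.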

5 Course Content

5.1 Volume of Learning

The Australian Qualifications Framework (AQF) says that “the volume of learning” includes guided learning, individual study, research, learning activities in the workplace and assessment activities. It could be argued that, rather than applying this at a qualification level, the volume of learning should be applied at a unit of competency level, and that rather than minimum duration, the relationship between the quality of the content and its ability to map to a rigorous assessment should be the deciding factor in assessing competency. As the AQF goes on to say:

“The duration of the delivery of the qualification may vary from the volume of learning specified for the qualification. Providers may offer the qualification in more or less time than the specified volume of learning, provided that delivery arrangements give students sufficient opportunity to achieve the learning outcomes for the qualification type, level and discipline.”

This could be applied to a competency rather than the whole qualification.

When looking at VET training, we need to remember that students come from widely varying backgrounds and experience, from a Year 11 student undertaking a VETiS program, to someone with a PhD who is looking for a career change, to an older person with wide-ranging life and work experience. This all affects how quickly they can become competent, based not only on their level of experience and the skills they already have or need to learn, but also on their ability to undertake and comprehend the training in the first place.

While setting minimum durations based on the time a learner who is new to the industry area would require to undertake supervised learning and assessment activities has merit (ASQA report, A review of issues relating to unduly short training, page 15), the dilemma remains that some people undertaking formal study will already be competent to some degree and will not require the same minimum duration.

In terms of auditing the appropriate volume of learning on a student-by-student basis, training providers using data from the AVETMISS enrolment should be able to demonstrate whether a student is new to the field and requires what could be considered as minimal learning hours, as opposed to someone who is requalifying and could reasonably be expected to complete a competency in a much shorter time frame. That is, by evaluating the needs of the student from the language, literacy and numeracy (LL&N) assessment together with the AVETMISS enrolment data, a training provider should be able to specify the expected or minimum volume of learning required. In a classroom this could be part of the learning plan. In an online environment, this tailored learning plan must be established and managed in a dynamic way, i.e. by requiring certain locks or minimum interactive learning hours to be completed before assessment is made available.

To improve course content Integrity measures authors must stipulate:

- Minimum learning time – Access to onlnline assessment should not be permitted until a minimum amount of learning that is determined on a student by student basis has been achieved

- LMS capability to manage time – The LMS must be able to manage the minimum online learning time limits and must also be able to record the amount of time spent in learning activities.

- Active learning – The LMS must be able to determine whether the learner is actively learning and stop the clock if they are not, e.g. if a presentation plays for an hour, can the student take a break, come back and still have their learning record show one hour?
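The three measures above can be illustrated with a minimal sketch. It is not how any particular LMS works. The class name `LearningClock`, the `IDLE_TIMEOUT_SECONDS` threshold and the idea of counting time only between closely spaced interaction events are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical threshold: a gap between interactions longer than this is
# treated as the learner being away, so the clock stops for that gap.
IDLE_TIMEOUT_SECONDS = 120


@dataclass
class LearningClock:
    """Accumulates active learning time from interaction timestamps (in seconds)."""

    required_seconds: float  # minimum set per student in their learning plan
    active_seconds: float = 0.0
    _last_event: Optional[float] = field(default=None, init=False, repr=False)

    def record_interaction(self, timestamp: float) -> None:
        """Register a click, answer or other meaningful interaction."""
        if self._last_event is not None:
            gap = timestamp - self._last_event
            # Count the gap only if the learner stayed active throughout it.
            if 0 <= gap <= IDLE_TIMEOUT_SECONDS:
                self.active_seconds += gap
        self._last_event = timestamp

    def assessment_unlocked(self) -> bool:
        """Assessment opens only once the student's minimum has been reached."""
        return self.active_seconds >= self.required_seconds


clock = LearningClock(required_seconds=300)
for t in (0, 60, 120, 3720, 3780):  # the learner walks away for an hour after t=120
    clock.record_interaction(t)
print(clock.active_seconds, clock.assessment_unlocked())
```

In this sketch the hour-long break adds nothing to the learning record, because no interaction occurred within the idle threshold, so the assessment stays locked until further active time is logged.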

6 Demonstration of skills in online simulated environments

Some units of competency require the demonstration of skills in a simulated industry environment. This can be achieved with very sophisticated training and assessment strategies combined with advanced technological approaches such as Scenario Based Training Interactives (SBTI), video capture and serious games. However, regulators now know the difference between a valid implementation of these approaches and an unsophisticated one, and may ask questions including:

- Has a simulated environment been constructed, either in the online scenario or by the student in their physical environment, that meets the equipment requirements specified by the unit of competency or implementation guide, e.g. with signage printed out or physical equipment present?

- Has the simulated environment been validated as adequate by a suitably qualified trainer/assessor and is there evidence of this?

- Does the student provide evidence of their skills in the simulated environment, e.g. by submitting video evidence rather than just a voice recording, which would not demonstrate those skills?

- How is the video evidence checked for authenticity, both for cheating and to confirm that the person in the submitted video is the student as enrolled with photo ID?

- Are gamified learning or assessment tools sophisticated enough, i.e. serious games that deliver meaningful skills training or assessment rather than just gimmicks? Is the logic and sophistication of the data collected in such games adequate to prove competency?

7 Issuing Statements of Attainment

Fraudulently copying or editing statements of attainment can be done no matter the mode of delivery, e.g. in-class or online. However, if statements of attainment are digitally issued online, RTO’s must ensure that the following integrity measures are in place to minimise fraudulent activity:

- No name change – The student must not be able to change their name online. An administrator should only be permitted to do this after identifying the student and then sighting an updated form of photographic identification, in the new name, that meets the identification integrity requirements.

- Unique ID – The certificate must contain a unique number that is linked to the unique student username.

- Watermark – The certificate must have a background watermark, with the unique details of the certificate (e.g. student name, date of birth, issue date, certificate number, signature of issuing authority, expiration date) printed over the top of the watermark(s).

- Locked PDF – The certificate must be in a PDF format and the PDF must have security enabled that locks the PDF and prevents edits.
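As one possible illustration of the Unique ID measure, the sketch below derives a certificate number from the student username and a serial number using a keyed hash (HMAC), so that a number copied onto a certificate for a different username fails verification. The `SOA-` prefix, the number layout, the function names and the use of HMAC are all assumptions for illustration. The white paper does not prescribe a scheme.

```python
import hashlib
import hmac


def issue_certificate_number(secret_key: bytes, username: str, serial: int) -> str:
    """Build a certificate number whose check code is bound to the username.

    The layout SOA-<serial>-<check code> is hypothetical.
    """
    payload = f"{username}:{serial}".encode("utf-8")
    check = hmac.new(secret_key, payload, hashlib.sha256).hexdigest()[:12].upper()
    return f"SOA-{serial:08d}-{check}"


def verify_certificate_number(secret_key: bytes, username: str, certificate_number: str) -> bool:
    """Return True only if the number was issued for this username."""
    try:
        prefix, serial_text, _check = certificate_number.split("-")
        serial = int(serial_text)
    except ValueError:
        return False  # wrong number of parts or a non-numeric serial
    if prefix != "SOA":
        return False
    expected = issue_certificate_number(secret_key, username, serial)
    # Constant-time comparison on bytes avoids leaking how much matched.
    return hmac.compare_digest(expected.encode("utf-8"), certificate_number.encode("utf-8"))


key = b"replace-with-a-real-secret"  # placeholder; a real key would be stored securely
number = issue_certificate_number(key, "jsmith", 42)
print(number, verify_certificate_number(key, "jsmith", number))
```

The design choice here is that the RTO, which holds the key, can confirm a certificate number against the enrolled username without the number being guessable or transferable by the student.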

8 Learning Management System Data Capture Requirements

Without highly granular data capture from the digital learning environment, it is more difficult to demonstrate that integrity has been maintained. Some Learning Management Systems (LMS’s) may only record which course sections have or have not been accessed and whether assessments have or have not been successfully completed, without capturing granular data such as the actual responses given to pass the assessment, the number of assessment attempts or the total learning engagement time.

In order to achieve integrity, an LMS must be used that is sophisticated enough not only to have integrity features in place, but also to collect integrity data and track student activity and progress through the learning material and assessments, including:

- Content engagement – Record the amount of time that a student has meaningfully interacted with learning content.

- Number of attempts – Record how many attempts students have made for each assessment.

- Result of all attempts – Capture the result of each attempt, not just that a student at some point provided correct answers.

- Database time stamp of data – The regulator may interrogate the formula used in the learning management system to determine the duration of learning and confirm that it is not fraudulently adding hours. There needs to be detailed scrutiny, and proof of validation, of the duration data produced by the online system.

RTO’s must ensure that the learning management system used to deliver training online captures data at a level of granularity that allows auditors to view every single piece of data submitted by the student in relation to assessment, whether that submission is correct or not. The number of attempts, and evidence of lockout after e.g. 3 attempts, must also be able to be shown, with data evidencing how the lockout was overturned and by whom. Most importantly, there must be evidence of duration and evidence that the way it has been calculated is valid.
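The kind of attempt-level record described above could look something like the following sketch. It assumes an append-only event list per student and assessment, a lockout threshold of three failed attempts (taken from the example in the text), and hypothetical names such as `AssessmentRecord` and `override_lockout`. It is an illustration only, not a description of any specific LMS.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

MAX_ATTEMPTS = 3  # lockout threshold, from the "3 attempts" example in the text


@dataclass
class AssessmentRecord:
    student: str
    assessment: str
    events: list = field(default_factory=list)  # append-only audit trail
    failed_since_reset: int = 0

    def _log(self, kind: str, **details) -> None:
        # Every event is time stamped in UTC so the sequence can be audited.
        stamp = datetime.now(timezone.utc).isoformat()
        self.events.append({"time": stamp, "kind": kind, **details})

    @property
    def locked(self) -> bool:
        return self.failed_since_reset >= MAX_ATTEMPTS

    def submit(self, response: str, correct: bool) -> bool:
        """Record the submitted response whether or not it is correct."""
        if self.locked:
            self._log("rejected_while_locked", response=response)
            return False
        self._log("attempt", response=response, correct=correct)
        if not correct:
            self.failed_since_reset += 1
            if self.locked:
                self._log("lockout")
        return correct

    def override_lockout(self, trainer: str, reason: str) -> None:
        """Record who overturned the lockout and why, then reset the counter."""
        self._log("lockout_overridden", by=trainer, reason=reason)
        self.failed_since_reset = 0


record = AssessmentRecord(student="jsmith", assessment="quiz-1")
for answer in ("a", "b", "c"):
    record.submit(answer, correct=False)
record.override_lockout(trainer="trainer-7", reason="student contacted trainer for support")
record.submit("d", correct=True)
for event in record.events:
    print(event["kind"], {k: v for k, v in event.items() if k not in ("time", "kind")})
```

Because nothing is ever overwritten, an auditor reading `events` sees every response, the point of lockout, and the identity of whoever overturned it.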

9 Auditing Approach for Online

Student satisfaction scores for single units of competency delivered 100% online are not always a valid representation of the quality or integrity of online training. Student satisfaction with very poor-quality online learning and low-integrity training and assessment may be very high. This is because students who need mandatory qualifications are often on a very short timeline and are interested in a short duration rather than the quality of the learning, and will therefore give high satisfaction scores to cheap, short-duration courses.

Because of the integrity issues highlighted above, asynchronous online training, where learning and assessment are delivered in the absence of a trainer or assessor, can be considered higher risk than, e.g., a trainer delivering a lesson via a webinar.

The risk of training units can also be considered in terms of the potential health risks to students or the community from inadequate training, and the amount of regulatory oversight that exists in that industry sector.

Whilst a well-written Training and Assessment Strategy (TAS) is the essential first point of reference during an audit of the validity of online training, the ONLY way to tell if the training and assessment strategy is being implemented, in either the classroom or online, is for the auditor to anonymously purchase the course, undertake the training and gain a statement of attainment (a mystery shop). During the process of obtaining the statement of attainment, the auditor will check that the course:

- Meets all of the items listed in this best practice document as well as the training and assessment strategy.

- Prevents the student guessing their way through the assessment, e.g. by locking them out after three wrong attempts.

- Allows the student to access meaningful and timely trainer support in accordance with the training and assessment strategy.

- Has valid learning material as defined by the Standards for RTO’s 2015, i.e. material in which the student is challenged throughout and is given an opportunity to put their knowledge into practice prior to final assessment; otherwise presentations are not considered part of learning duration.

- Has online assessment and learning materials that comply with the Standards for RTO’s 2015 in relation to integrity, authenticity, reliability and fairness, i.e. in relation to these best practice online integrity measures.

- Reports a duration that matches the actual course duration as undertaken by the auditor.

- Does not have loopholes allowing shortcuts. The auditor will also confirm the impact of non-attendance on the reported duration of training in the learning management system.

Because electronic data can be easily manipulated, auditors are more likely to ask for 100% of the data rather than samples, and data collected during mystery shopping will be compared to what is being reported.
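The comparison between mystery-shop observations and reported data can be pictured with a small sketch. The function name, the per-activity dictionaries of minutes and the five-minute tolerance are assumptions chosen for illustration. An actual audit would define its own data format and thresholds.

```python
def find_duration_discrepancies(observed, reported, tolerance_minutes=5.0):
    """Compare minutes the auditor actually spent with minutes the LMS reported.

    Both arguments map an activity name to minutes. The result maps each
    activity outside the tolerance to (reported - observed), so a positive
    value means the LMS reported more time than the auditor spent.
    """
    discrepancies = {}
    for activity in sorted(set(observed) | set(reported)):
        seen = observed.get(activity, 0.0)      # missing means no time observed
        claimed = reported.get(activity, 0.0)   # missing means nothing reported
        if abs(claimed - seen) > tolerance_minutes:
            discrepancies[activity] = claimed - seen
    return discrepancies


auditor_log = {"module 1": 30.0, "quiz": 10.0}
lms_report = {"module 1": 60.0, "quiz": 12.0}
print(find_duration_discrepancies(auditor_log, lms_report))
```

Here the quiz falls within tolerance, while module 1 is flagged because the reported time is double what the auditor recorded.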

10 Feedback welcome and appreciated

We value feedback from industry, regulators, trainer/assessors, training organisations and students. Please let us know if you have any feedback in relation to this white paper so that we can consider it for our next update.

feedback@enrolo.com

Disclosure: All of the integrity measures mentioned in this white paper, including enrolment, training and assessment (including demonstration of skills in simulated online environments and serious games), were developed for several units of competency using the Enrolo learning management system (LMS). These units of competency were audited by ASQA, and successfully added to an RTO’s scope for distance learning via asynchronous and synchronous, 100% online learning mode of delivery.

Disclaimer: Online training is a rapidly evolving mode of delivery. Any information provided in this white paper is the opinion of the authors, is open to interpretation and is not to be relied on for compliance purposes.